AI systems are used across many industries, including banking, healthcare, public services, aerospace, and defense. When these systems fail or introduce bias, the consequences can be severe. For example, in July 2025, an AI coding assistant from Replit deleted a user’s production database.

To address such risks and control AI systems’ behavior, the European Union introduced the EU AI Act. It defines clear rules for how AI systems must be built and used, and how system requirements should be tracked to build a safe and reliable system.

As a result, it becomes important to capture all regulatory requirements defined by the EU AI Act as system requirements, which changes the whole requirements engineering process for AI software development.

Now, let’s understand what the EU AI Act covers and how it impacts requirements engineering.

What Is the EU AI Act?

The EU AI Act is the world’s first comprehensive law governing artificial intelligence across an entire economy. It defines rules that must be followed during AI system development and deployment before a system can be placed on the EU market. These rules cover:

- Handling data quality to train AI models

- Preparing technical documentation

- Automatic logging and traceability management

- Managing risks through impact assessment

- Post-market monitoring

The main goal of the EU AI Act is to reduce the risks that AI can create for people, businesses, and society. It aims to ensure that AI software respects users’ privacy, protects their health and safety, and avoids bias and harmful failures.

So, organizations developing AI solutions that affect people’s rights, safety, or access to services must follow the EU AI Act.

Quick note: Non-compliance with the EU AI Act can lead to fines of up to €35 million or 7 percent of global annual turnover, whichever is higher. This top tier applies to prohibited AI practices; most other violations carry lower caps.
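The “whichever is higher” rule in the note above is simple arithmetic. Here is a minimal Python sketch of it. The function name and the example turnover figures are hypothetical and only illustrate the calculation; this is not legal guidance.

```python
FIXED_CAP_EUR = 35_000_000   # fixed amount quoted in the note above
TURNOVER_PERCENT = 7         # percentage of global annual turnover


def max_fine_eur(global_annual_turnover_eur: int) -> float:
    """Return the upper bound of the fine: the higher of the two caps."""
    turnover_based_cap = global_annual_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based_cap)


# Hypothetical companies, for illustration only.
print(max_fine_eur(100_000_000))    # 7% is EUR 7 million, so the fixed cap applies
print(max_fine_eur(2_000_000_000))  # 7% is EUR 140 million, so the percentage applies
```

Under this rule, the percentage only becomes the binding figure once turnover exceeds €500 million, because 7 percent of €500 million equals €35 million.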

Why the EU AI Act Is Now a Requirements Engineering Issue

The EU AI Act introduces multiple obligations, such as traceability of requirements, transparency of system behavior, and a human oversight mechanism, that directly affect AI systems’ behavior. So, these obligations must be defined and implemented within the system development process.

This shift connects compliance to requirements engineering. Legal clauses should not be handled as a separate track. They must be translated into actionable functional and non-functional requirements that design, development, and testing teams can implement directly.

For example:

- Risk management -> Define functional requirements for risk scoring, threshold alerts, and automated risk handling.

- Data quality -> Define non-functional requirements for data set completeness and bias checks.

- Transparency -> Define requirements for tracing changes and decisions and for disclosing AI use to users.
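The mapping in the list above can be sketched as structured requirement records. This is a minimal illustration in Python: the `Requirement` class, the `REQ-...` ID scheme, and the requirement wording are assumptions made for this example, not text from the EU AI Act or from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str      # hypothetical ID scheme
    obligation: str  # obligation area from the list above
    kind: str        # "functional" or "non-functional"
    statement: str   # illustrative wording only


REQUIREMENTS = [
    Requirement("REQ-RISK-001", "Risk management", "functional",
                "The system shall compute a risk score for each decision "
                "and raise an alert when the score exceeds a configured threshold."),
    Requirement("REQ-DATA-001", "Data quality", "non-functional",
                "Training data sets shall pass completeness and bias checks "
                "before each model release."),
    Requirement("REQ-TRAN-001", "Transparency", "functional",
                "The system shall record changes and decisions and tell users "
                "when they are interacting with AI."),
]


def by_obligation(requirements):
    """Group requirement IDs under the obligation they implement."""
    grouped = {}
    for req in requirements:
        grouped.setdefault(req.obligation, []).append(req.req_id)
    return grouped


print(by_obligation(REQUIREMENTS))
```

Keeping the obligation as a field on each requirement makes it possible to ask, at any time, which obligations have no requirement behind them yet.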

Regulations also demand a controlled development process. Teams must version their requirements and maintain end-to-end traceability so they can see how compliance obligations, requirements, tests, and risks are connected.

In short, without structured requirements management, it becomes hard for teams to meet obligations defined in the EU AI Act.

Overview of EU AI Act Risk Categories

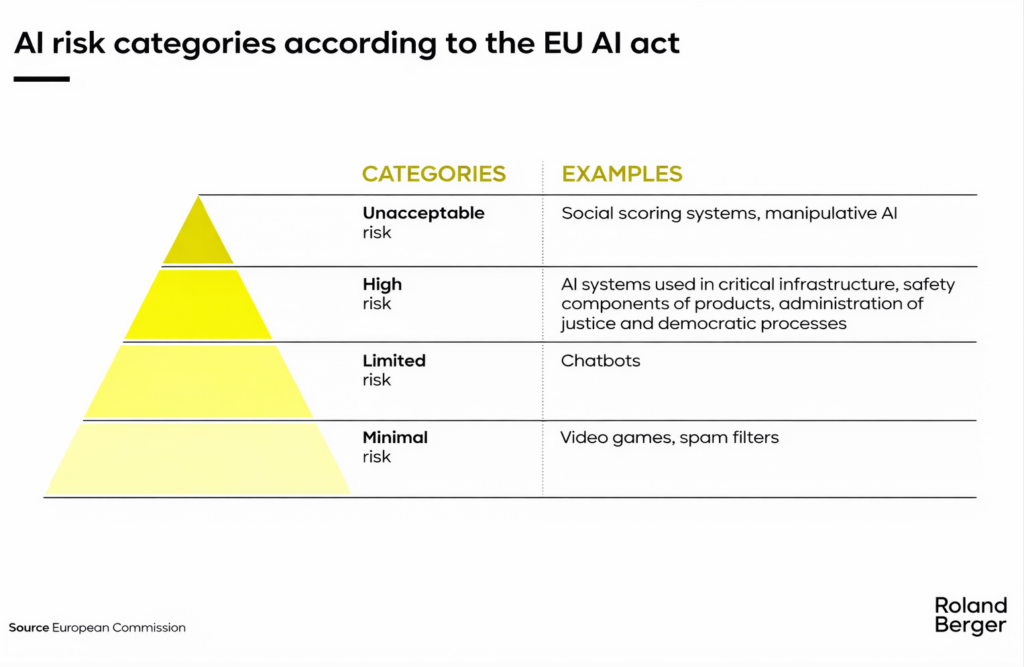

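The EU AI Act uses a risk-based framework. Instead of regulating every AI system equally, it classifies systems into the four categories below, based on the risk they introduce. Prohibited practices are listed in Article 5, the classification rules for high-risk systems are in Article 6 and Annex III, and the transparency obligations for limited-risk systems are in Article 50.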

Unacceptable Risk (Prohibited)

AI systems that manipulate human behavior, for example systems that subtly steer users’ financial decisions, pose an unacceptable risk. They can influence behavior or restrict rights without transparency or consent, so they are prohibited in the European market.

High-Risk AI Systems

High-risk AI systems are permitted in the EU market, but they must implement a strict requirements management process, ensure data quality, and prepare technical documentation. Organizations also need to implement logging, traceability, and human oversight mechanisms while developing high-risk AI systems.

Examples include:

- AI models used to filter and rank job applicants

- Credit scoring engines used by banks to approve loans

- Medical AI systems that assist in detecting diseases from scans

Limited Risk

These systems are less risky. They must meet transparency obligations but not the full set of obligations that apply to high-risk systems. Examples include:

- Chatbots, which must inform users that they are interacting with AI

- AI-generated content, which may need to be labeled

Minimal Risk

The majority of AI applications fall under this category. They pose little or no risk to people’s rights or safety, so organizations face minimal obligations when implementing them.

Examples of such systems are:

- Spam filters

- AI used in video games

- Recommendation engines for entertainment platforms

Why Risk Classification Must Be Mapped Into Requirements Early

Operators of AI systems should determine which category their application falls into before development starts. Doing so defines what the system must implement from the start. If this mapping is delayed, core design decisions are made without the required controls.

For example, if developers building AI-based hiring software (a high-risk AI system) skip traceability or bias checks, they will face legal problems later, when launching the application.

Classification also determines the documentation burden. High-risk systems require structured technical files, test evidence, end-to-end traceability, version history, and logs of all changes and decisions made. Without early requirements mapping, teams struggle to produce consistent audit reports.

So, requirements teams should own this mapping. They must translate risk categories into clear system requirements, linked to controls, test cases, and documentation.
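As a rough sketch of what this mapping could look like, the Python example below ties each risk category to the controls named in the category descriptions above and reports which ones a project has not yet implemented. The control names, the `RiskTier` enum, and the helper functions are assumptions for illustration; the actual set of controls for a given system must come from a legal reading of the Act.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative control names, taken from the category descriptions above.
REQUIRED_CONTROLS = {
    RiskTier.HIGH: [
        "requirements_management",
        "data_quality",
        "technical_documentation",
        "logging",
        "traceability",
        "human_oversight",
    ],
    RiskTier.LIMITED: ["transparency_notice"],
    RiskTier.MINIMAL: [],
}


def required_controls(tier):
    """Return the controls a system in this tier must implement."""
    if tier is RiskTier.UNACCEPTABLE:
        # Prohibited systems cannot be made compliant by adding controls.
        raise ValueError("Prohibited: the system cannot be placed on the EU market")
    return REQUIRED_CONTROLS[tier]


def missing_controls(tier, implemented):
    """List required controls that are not yet implemented, in a stable order."""
    return [c for c in required_controls(tier) if c not in implemented]


# Hypothetical hiring tool that so far only has logging and data quality checks.
print(missing_controls(RiskTier.HIGH, {"logging", "data_quality"}))
```

Running a check like this at project kickoff, rather than before launch, is what surfaces missing controls while the design can still change.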

Documentation Obligations Under the EU AI Act

Under the EU AI Act, organizations building high-risk AI systems must keep their technical documentation up to date and keep it available to national authorities for 10 years after the system is placed on the market. The main documentation obligations are:

- AI compliance documentation for high-risk AI systems: It must describe the purpose of the system, architecture, model logic, data quality and sources, performance metrics, known limitations, and risk controls. These documents form the basis for regulatory review.

- Record-keeping and logging requirements: Teams must document different versions of the system, inputs, outputs, and system logs. Also, there must be end-to-end traceability for root cause analysis.

- Quality management system obligations: This documentation should include procedures for quality design, testing, and continuous improvement according to Article 17 of the EU AI Act.

- Post-market monitoring documentation: It covers system performance, incidents, and corrective actions, and it keeps risk assessments up to date after deployment.
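To make the record-keeping item above more concrete, here is a minimal Python sketch of a structured log entry that captures the system version, input, output, and the requirements it relates to. The field names, the example values, and the JSON format are assumptions for illustration. The Act does not prescribe this schema, and what must actually be logged depends on the system.

```python
import json
from datetime import datetime, timezone


def make_log_record(system_version, model_input, model_output, requirement_ids):
    """Build one audit log entry as a plain dictionary."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC, ISO 8601
        "system_version": system_version,
        "input": model_input,
        "output": model_output,
        "requirement_ids": sorted(requirement_ids),  # links back to requirements
    }


# Hypothetical decision from a hiring tool.
record = make_log_record(
    system_version="1.4.2",
    model_input={"applicant_id": "A-17"},
    model_output={"decision": "refer_to_human"},
    requirement_ids=["REQ-TRAN-001"],
)

# One JSON object per line is easy to append to a file and to search later.
line = json.dumps(record, sort_keys=True)
print(line)
```

Storing the system version and requirement IDs with every entry is what lets a root cause analysis go from a single output back to the model release and the requirement behind it.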

How the EU AI Act Changes Requirements Engineering Practices

The EU AI Act shifts requirements from policy statements to verifiable system specifications. It requires teams to define what the system should do, how it will be validated and tested, and how results will be recorded.

Traditionally, requirements focused on system performance, features, and usability. Now teams must also manage ethical, transparency, oversight, and traceability requirements alongside regular work items.

There is a clear rise in non-functional requirements. Explainability, auditability, and robustness must be defined with measurable conditions, not general statements.
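One way to read “measurable conditions” is that each non-functional requirement names a metric, a comparison, and a threshold that a test can evaluate. The Python sketch below assumes exactly that. The metric names and threshold values are invented for illustration and are not taken from the EU AI Act.

```python
import operator
from dataclasses import dataclass


@dataclass(frozen=True)
class MeasurableRequirement:
    req_id: str
    metric: str       # name of the measured quantity
    comparator: str   # "<=" or ">="
    threshold: float  # illustrative value, to be agreed per project


_COMPARATORS = {"<=": operator.le, ">=": operator.ge}


def evaluate(req, measured):
    """Return True if the measured value satisfies the requirement."""
    value = measured[req.metric]
    return _COMPARATORS[req.comparator](value, req.threshold)


NFRS = [
    # Explainability: share of decisions that ship with an explanation.
    MeasurableRequirement("NFR-EXPL-001", "decisions_with_explanation_ratio", ">=", 0.99),
    # Robustness: accuracy lost when inputs are perturbed with noise.
    MeasurableRequirement("NFR-ROB-001", "accuracy_drop_under_noise", "<=", 0.05),
]

# Hypothetical measurements from a validation run.
measured = {
    "decisions_with_explanation_ratio": 0.995,
    "accuracy_drop_under_noise": 0.08,
}

results = {req.req_id: evaluate(req, measured) for req in NFRS}
print(results)  # {'NFR-EXPL-001': True, 'NFR-ROB-001': False}
```

A statement such as “the system shall be robust” cannot fail a test, whereas the second requirement here fails as soon as the measured drop exceeds the agreed threshold.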

To adhere to the EU AI Act, requirements must be managed in such a way that they produce compliance evidence. Each requirement must link to development work items, logs, test cases, and validation results that can be used while preparing audit reports.
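The linking described above can be checked automatically. The following Python sketch assumes a simple dictionary of trace links per requirement and reports which kinds of evidence are still missing. The IDs, the dictionary layout, and the `find_evidence_gaps` helper are hypothetical; a requirements management tool would normally hold this data.

```python
# Evidence types named in the paragraph above.
EVIDENCE_KINDS = ("work_items", "logs", "test_cases", "validation_results")


def find_evidence_gaps(trace_links, required_kinds=EVIDENCE_KINDS):
    """Map each requirement ID to the evidence kinds it has no links for."""
    gaps = {}
    for req_id, links in trace_links.items():
        missing = [kind for kind in required_kinds if not links.get(kind)]
        if missing:
            gaps[req_id] = missing
    return gaps


# Hypothetical trace data for two requirements.
TRACE_LINKS = {
    "REQ-RISK-001": {
        "work_items": ["WI-101"],
        "logs": ["LOG-2025-03"],
        "test_cases": ["TC-201"],
        "validation_results": ["VR-301"],
    },
    "REQ-DATA-001": {
        "work_items": ["WI-102"],
        "logs": ["LOG-2025-04"],
        "test_cases": [],  # linked field exists but is empty
    },
}

print(find_evidence_gaps(TRACE_LINKS))
# {'REQ-DATA-001': ['test_cases', 'validation_results']}
```

An empty result means every requirement has at least one link of each kind, which is the minimum needed before the links can be assembled into an audit report.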

In practice, requirements engineering moves closer to governance and audit readiness, not just system design.
